What is Kubernetes?

Kubernetes is a rapidly evolving platform that manages container-based applications and their associated networking and storage components. Kubernetes focuses on the application workloads, not the underlying infrastructure components. Kubernetes provides a declarative approach to deployments, backed by a robust set of APIs for management operations.

You can build and run modern, portable, microservices-based applications, using Kubernetes to orchestrate and manage the availability of the application components. Kubernetes supports both stateless and stateful applications as teams progress through the adoption of microservices-based applications.

As an open platform, Kubernetes allows you to build your applications with your preferred programming language, OS, libraries, or messaging bus. Existing continuous integration and continuous delivery (CI/CD) tools can integrate with Kubernetes to schedule and deploy releases.

Azure Kubernetes Service (AKS) is a platform as a service (PaaS) offering from Azure. AKS provides a managed Kubernetes service that reduces the complexity of deployment and core management tasks, like upgrade coordination. The Azure platform manages the AKS control plane, and you only pay for the AKS nodes that run your applications.

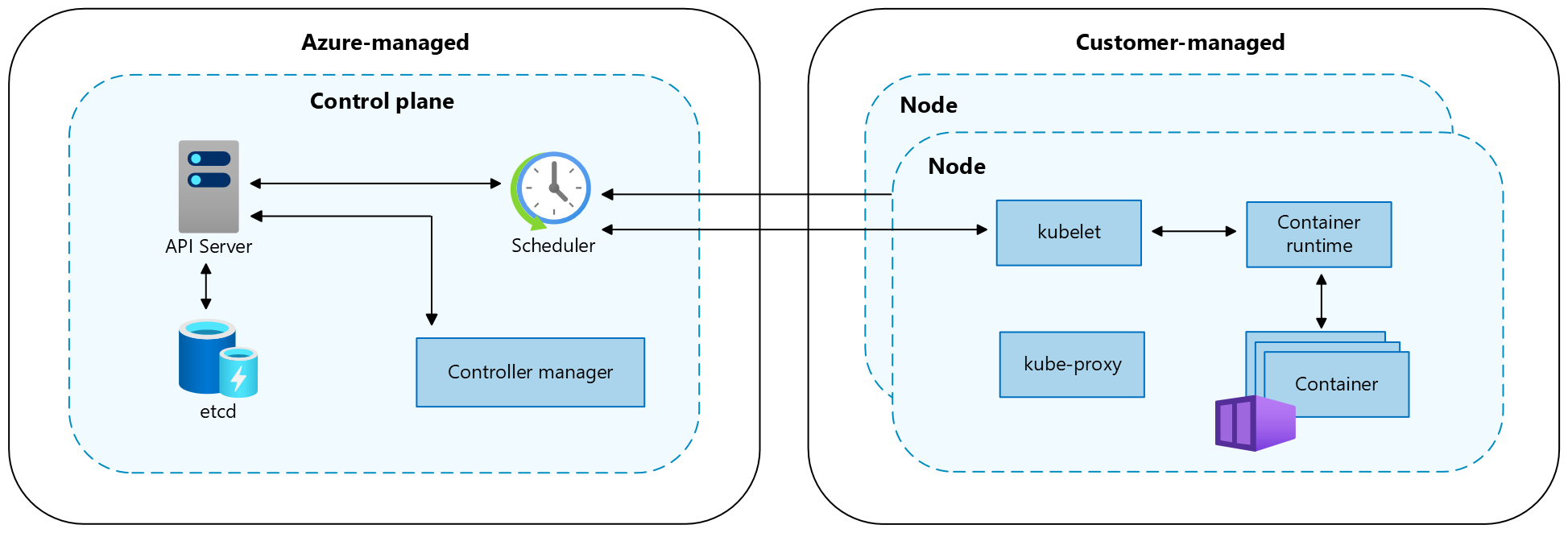

Kubernetes cluster architecture

A Kubernetes cluster is divided into two components:

- Control plane: provides the core Kubernetes services and orchestration of application workloads.

- Nodes: run your application workloads.

Control plane

When you create an AKS cluster, a control plane is automatically created and configured. This control plane is provided at no cost as a managed Azure resource abstracted from the user. You only pay for the nodes attached to the AKS cluster. The control plane and its resources reside only in the region where you created the cluster.

The control plane includes the following core Kubernetes components:

| Component | Description |
|---|---|
| kube-apiserver | The API server is how the underlying Kubernetes APIs are exposed. This component provides the interaction for management tools, such as kubectl or the Kubernetes dashboard. |
| etcd | A highly available key-value store that maintains the state and configuration of your Kubernetes cluster. |
| kube-scheduler | When you create or scale applications, the Scheduler determines which nodes can run the workload and starts the workload on them. |
| kube-controller-manager | The Controller Manager oversees a number of smaller Controllers that perform actions such as replicating pods and handling node operations. |
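To make the scheduler and controller roles in the table more concrete, the following is a minimal Python sketch, not the actual Kubernetes implementation. The `Node` and `PodRequest` types, the `schedule` function, and the `reconcile_replicas` function are hypothetical names introduced for illustration. The sketch assumes that scheduling only considers free CPU and memory, whereas the real kube-scheduler applies many more filtering and scoring rules.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    free_cpu_millicores: int
    free_memory_mib: int


@dataclass
class PodRequest:
    name: str
    cpu_millicores: int
    memory_mib: int


def feasible_nodes(pod, nodes):
    """Return the nodes that have enough free CPU and memory for the pod."""
    return [
        n for n in nodes
        if n.free_cpu_millicores >= pod.cpu_millicores
        and n.free_memory_mib >= pod.memory_mib
    ]


def schedule(pod, nodes):
    """Place the pod on the feasible node with the most free CPU.

    Returns the chosen node name, or None if no node can run the pod.
    The "most free CPU" rule is an illustrative assumption.
    """
    candidates = feasible_nodes(pod, nodes)
    if not candidates:
        return None
    chosen = max(candidates, key=lambda n: n.free_cpu_millicores)
    # Reserve the requested resources on the chosen node.
    chosen.free_cpu_millicores -= pod.cpu_millicores
    chosen.free_memory_mib -= pod.memory_mib
    return chosen.name


def reconcile_replicas(desired, running):
    """Return the actions needed to move from the running pods to the desired count."""
    diff = desired - len(running)
    if diff > 0:
        return [("create", None)] * diff
    if diff < 0:
        # Remove the surplus pods, oldest entries in the list first.
        return [("delete", name) for name in running[:-diff]]
    return []
```

For example, `reconcile_replicas(3, ["p1"])` returns two `create` actions. This mirrors the declarative model: you state the desired replica count, and a controller works out the steps to reach it.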

AKS provides a single-tenant control plane, with a dedicated API server, scheduler, and other components. You define the number and size of the nodes, and the Azure platform configures the secure communication between the control plane and nodes. Interaction with the control plane occurs through the Kubernetes APIs, using tools such as kubectl or the Kubernetes dashboard.

While you don’t need to configure components (like a highly available etcd store) with this managed control plane, you can’t access the control plane directly. Kubernetes control plane and node upgrades are orchestrated through the Azure CLI or Azure portal. To troubleshoot possible issues, you can review the control plane logs through Azure Monitor logs.

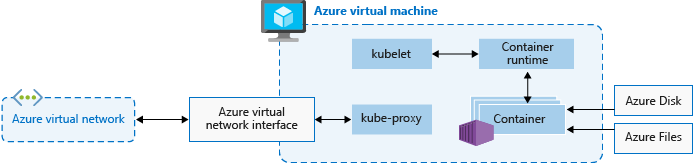

Nodes and node pools

To run your applications and supporting services, you need a Kubernetes node. An AKS cluster has at least one node, an Azure virtual machine (VM) that runs the Kubernetes node components and container runtime.

| Component | Description |
|---|---|
| kubelet | The Kubernetes agent that processes the orchestration requests from the control plane along with scheduling and running the requested containers. |
| kube-proxy | Handles virtual networking on each node. The proxy routes network traffic and manages IP addressing for services and pods. |
| container runtime | Allows containerized applications to run and interact with additional resources, such as the virtual network and storage. AKS clusters using Kubernetes version 1.19+ for Linux node pools use containerd as their container runtime. Beginning in Kubernetes version 1.20 for Windows node pools, containerd can be used in preview for the container runtime, but Docker is still the default container runtime. AKS clusters using prior versions of Kubernetes for node pools use Docker as their container runtime. |

The Azure VM size for your nodes defines the number of CPUs, the amount of memory, and the size and type of storage available (such as high-performance SSD or regular HDD). Plan the node size around whether your applications may require large amounts of CPU and memory or high-performance storage. Scale out the number of nodes in your AKS cluster to meet demand. For more information on scaling, see Scaling options for applications in AKS.
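As a rough aid for planning node size and count, the following Python sketch estimates how many nodes a workload needs from its total CPU and memory requests. The function name `nodes_needed` and the `reserved_fraction` parameter are hypothetical, and the 20% default is only an illustrative assumption for capacity set aside for the OS and node components, not a documented AKS figure.

```python
import math


def nodes_needed(total_cpu_cores, total_memory_gib,
                 node_cpu_cores, node_memory_gib,
                 reserved_fraction=0.2):
    """Estimate the node count that fits a workload's CPU and memory requests.

    reserved_fraction is an assumed share of each node that is not
    available to application pods (illustrative value, not an AKS default).
    """
    if not 0 <= reserved_fraction < 1:
        raise ValueError("reserved_fraction must be in [0, 1)")
    usable_cpu = node_cpu_cores * (1 - reserved_fraction)
    usable_memory = node_memory_gib * (1 - reserved_fraction)
    by_cpu = math.ceil(total_cpu_cores / usable_cpu)
    by_memory = math.ceil(total_memory_gib / usable_memory)
    # The scarcer resource determines the node count; always keep one node.
    return max(by_cpu, by_memory, 1)
```

Under these assumptions, a workload requesting 24 cores and 40 GiB on nodes with 4 cores and 16 GiB is CPU-bound: `nodes_needed(24, 40, 4, 16)` evaluates to 8. A VM size with more CPU per node would lower that count.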

In AKS, the VM image for your cluster’s nodes is based on Ubuntu Linux, Mariner Linux, or Windows Server 2019. When you create an AKS cluster or scale out the number of nodes, the Azure platform automatically creates and configures the requested number of VMs. Agent nodes are billed as standard VMs, so any VM size discounts (including Azure reservations) are automatically applied.

For managed disks, the default disk size and performance will be assigned according to the selected VM SKU and vCPU count. For more information, see Default OS disk sizing.

If you need advanced configuration and control on your Kubernetes node container runtime and OS, you can deploy a self-managed cluster using Cluster API Provider Azure.

Node pools

Nodes of the same configuration are grouped together into node pools. A Kubernetes cluster contains at least one node pool. The initial number of nodes and size are defined when you create an AKS cluster, which creates a default node pool. This default node pool in AKS contains the underlying VMs that run your agent nodes.
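The grouping described above can be pictured with a short Python sketch. It is a simplified model, not how AKS stores node pools: the `group_into_pools` function and the dictionary keys `name`, `vm_size`, and `os` are hypothetical, and "same configuration" is assumed here to mean the same VM size and operating system.

```python
from collections import defaultdict


def group_into_pools(nodes):
    """Group node names by their configuration (VM size and OS)."""
    pools = defaultdict(list)
    for node in nodes:
        key = (node["vm_size"], node["os"])
        pools[key].append(node["name"])
    return dict(pools)


# Example inventory with illustrative values.
example_nodes = [
    {"name": "node-1", "vm_size": "Standard_DS2_v2", "os": "Linux"},
    {"name": "node-2", "vm_size": "Standard_DS2_v2", "os": "Linux"},
    {"name": "node-3", "vm_size": "Standard_D4s_v3", "os": "Windows"},
]
example_pools = group_into_pools(example_nodes)
```

Here `example_pools` contains two pools: one with `node-1` and `node-2`, and one with `node-3`.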

To ensure your cluster operates reliably, you should run at least two (2) nodes in the default node pool.

When you scale or upgrade an AKS cluster, the action is performed against the default node pool. You can also choose to scale or upgrade a specific node pool. For upgrade operations, running containers are scheduled on other nodes in the node pool until all the nodes are successfully upgraded.
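The upgrade behavior can be sketched as a simple simulation in Python. This is a toy model under stated assumptions, not the AKS upgrade procedure: the `rolling_upgrade` function, the pool layout, and the "least-loaded node" placement rule are all hypothetical, and real upgrades involve additional steps that are not modeled here.

```python
def rolling_upgrade(pool, target_version):
    """Upgrade nodes one at a time, moving their pods to other nodes first.

    pool maps a node name to {"version": str, "pods": list of pod names}.
    The pool is modified in place and also returned.
    """
    for name in list(pool):
        node = pool[name]
        others = [n for n in pool if n != name]
        if node["pods"] and not others:
            raise RuntimeError("no other node available to host pods during the upgrade")
        # Move each pod to the other node that currently runs the fewest pods.
        for pod in node["pods"]:
            target = min(others, key=lambda n: len(pool[n]["pods"]))
            pool[target]["pods"].append(pod)
        node["pods"] = []
        # With its pods moved away, the node can be upgraded.
        node["version"] = target_version
    return pool
```

In this model, a pool with a single node that is running pods raises an error, because there is nowhere to move the workloads while that node is upgraded. That is one reason a node pool with at least two nodes is more resilient.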

For more information about how to use multiple node pools in AKS, see Create and manage multiple node pools for a cluster in AKS.